A view from the trenches: from "Google for the Enterprise" in the early 2000s to RAG and generative synthesis — and the model that finally changed the game.

In the early 2000s, I was the enterprise architect for search at a major pharmaceutical company. The mandate was simple to state and impossible to execute: build "Google for the Enterprise." Make every document, every report, every row in every Oracle database queryable in plain English. A scientist should be able to ask a question the way they would ask a colleague, and the system should bring back the right answer.

We made it work, sort of. We crawled file shares, SharePoint sites, lab notebooks, regulatory submissions, and structured data from Oracle. We built taxonomies, controlled vocabularies, federated indexes. We invested in the best commercial search engines of the era. And on a good day, a chemist could type a few keywords and get back a reasonable list of documents to wade through.

On a bad day, the system would helpfully retrieve a clinical-trial protocol when the scientist was asking about a synthesis protocol. Or a manufacturing protocol when they wanted a drug-research protocol. Same word, completely different meaning, and the search engine had no idea.

The word "protocol" meant five different things to five different scientists. Our search engine treated all five as the same string of characters.

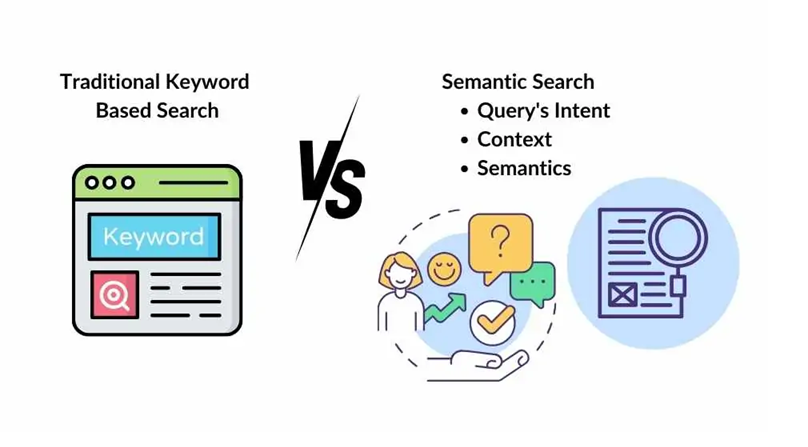

That was the protocol problem — and it was a stand-in for a much larger problem. Enterprise search, in the keyword era, was very good at matching strings and very bad at understanding meaning. Twenty years later, that gap has finally closed. This is the story of how it closed, what changed, and what it means for the way we work now.

• • •

The Keyword Era and Its Limits

The mathematical foundations of keyword search were largely in place by the 1980s: the inverted index, TF-IDF scoring, Boolean operators, stemming, stopword lists. By the time I was working in pharma search, these techniques were mature and well-tooled. Lucene was already an open-source standard. Commercial engines such as Verity, FAST, Autonomy, and Endeca layered concept extraction, taxonomies, and federation on top.

What none of them could do was understand meaning. The search engine saw "protocol" as a token. It did not know that in a phase-II trial document the word referred to an enrollment plan, while in a medicinal-chemistry report it referred to a reaction sequence. We tried to bridge the gap with controlled vocabularies, ontologies, synonym rings, and faceted classification. Each of those helped at the margins. None of them solved the underlying problem, which was that strings are not meanings.
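To make the limitation concrete, here is a minimal sketch of keyword retrieval over an inverted index. The two documents and the helper names (`build_index`, `search`) are invented for illustration and are not taken from any of the engines named above; real engines add scoring, stemming, and much more.

```python
from collections import defaultdict

# Two hypothetical documents that use "protocol" in unrelated senses.
DOCS = {
    "trial-plan": "enrollment protocol for the phase II trial patients",
    "chem-report": "synthesis protocol with reagent yield and purification step",
}

def build_index(docs):
    """Map each lowercase token to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for token in text.lower().split():
            index[token].add(doc_id)
    return index

def search(index, query):
    """Boolean AND: return ids of documents containing every query token."""
    postings = [index.get(tok, set()) for tok in query.lower().split()]
    return set.intersection(*postings) if postings else set()

index = build_index(DOCS)
print(sorted(search(index, "protocol")))            # both documents match
print(sorted(search(index, "synthesis protocol")))  # only the chemistry report
```

The one-word query cannot tell the two senses apart. Adding a second keyword helps only when the user already knows which word to add.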

The other limit was synthesis. A search engine, by definition, returns a list. A scientist looking for "what do we know about CYP3A4 inhibition by compound X" would get back fifty documents and have to read all of them. The tsunami of results was the deliverable. Turning that tsunami into a usable research work product was the scientist's job, and it consumed enormous amounts of time.

The Slow Climb: Three Decades of Incremental Wins

Semantic search did not appear overnight. The transformer was the breakthrough, but it sat on top of a long string of incremental innovations, some from information retrieval, some from machine learning, some from neural language modeling. Here is the lineage as I read it, in chronological order:

1990 — Latent Semantic Indexing (LSI/LSA)

The first whisper that meaning could be a vector.

Deerwester and colleagues used singular value decomposition on term-document matrices to project documents into a lower-dimensional "concept" space. Documents that shared no exact words but shared themes ended up near each other. It was slow, brittle on large corpora, and rarely beat tuned keyword search in practice — but the idea that meaning is geometric, not lexical, was planted.

1998 — PageRank

Authority as a ranking signal.

Not a semantic technique, strictly speaking, but PageRank made web search usable by promoting high-authority pages over keyword-stuffed ones. It set the expectation that ranking quality matters as much as recall.

1999–2003 — Topic Models (pLSA, LDA)

Documents as mixtures of topics.

Hofmann's probabilistic LSA and Blei's Latent Dirichlet Allocation gave us a probabilistic way to discover latent topics in a corpus. We used LDA in pharma to cluster regulatory documents by therapeutic area. It worked, but the topics were unstable, hard to label, and the model knew nothing about word order.

2013 — Word2Vec

Words become dense vectors with arithmetic.

Mikolov's team at Google trained shallow neural networks to predict words from their neighbors. The result was a vector for every word, where geometric distance reflected semantic similarity. The famous example — king − man + woman ≈ queen — made it suddenly obvious that meaning had a math. But word2vec gave each word a single vector. "Protocol" still got one embedding regardless of context.
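The arithmetic can be illustrated with a toy sketch. The three-dimensional vectors below are hand-made assumptions, not trained word2vec embeddings (which typically have hundreds of dimensions), and `analogy` is a hypothetical helper. Note that "protocol" has exactly one entry in the table, whatever sentence it appears in.

```python
import math

# Hypothetical 3-D vectors: axis 0 ~ royalty, axis 1 ~ male, axis 2 ~ female.
VECTORS = {
    "king":     [0.9, 0.8, 0.1],
    "queen":    [0.9, 0.1, 0.8],
    "man":      [0.1, 0.9, 0.1],
    "woman":    [0.1, 0.1, 0.9],
    "protocol": [0.3, 0.4, 0.4],  # one static vector, regardless of sense
}

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def analogy(a, b, c, vectors):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, vectors[w]))

print(analogy("king", "man", "woman", VECTORS))  # queen
```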

2014–2015 — Sequence-to-Sequence with Attention

A neural network learns to focus.

Bahdanau, Cho, and Bengio introduced attention as a soft alignment mechanism for machine translation. Instead of compressing a sentence into a fixed vector, the decoder could "look back" at any input word as it generated each output word. Attention was the conceptual seed that the transformer would later make the entire architecture.

2017 — "Attention Is All You Need"

The transformer.

Vaswani and colleagues threw out the recurrence and the convolutions and built an architecture out of pure attention. Self-attention let every token in a sequence look at every other token in parallel. The model was faster to train, scaled to vastly larger corpora, and captured long-range dependencies that LSTMs struggled with.

2018 — ELMo

Embeddings that change with context.

ELMo used a bidirectional LSTM to produce contextual word embeddings: "protocol" in a clinical trial sentence got a different vector than "protocol" in a chemistry sentence. Suddenly the protocol problem was tractable. ELMo was a strong signal that contextual representation, not static embeddings, was the path forward.

2018–2019 — BERT and Friends

Pretrain once, fine-tune everywhere.

BERT pretrained a transformer on enormous text corpora using masked-language modeling and was then fine-tuned for specific tasks. Google announced in 2019 that BERT was part of its production search ranking, a highly visible case of contextual language understanding measurably improving everyday queries. Sentence-BERT and Dense Passage Retrieval followed, turning transformers into practical retrieval engines.

2020 onward — RAG and the LLM Era

From retrieval to synthesis.

Lewis and colleagues at Facebook AI formalized Retrieval-Augmented Generation in 2020. By the time GPT-3.5 and GPT-4 arrived, the full stack was in place: dense embeddings for retrieval, vector databases for scale, and a generative model that could read the retrieved chunks and synthesize a coherent answer.

Looking at this lineage end to end, the transformer is the inflection point but not the entire story. It is the moment when three separate threads — geometric representations of meaning, contextual embeddings, and attention-based architectures — converged into a single, scalable, trainable model. Everything before 2017 was setup. Everything after has been amplification.

• • •

What the Transformer Actually Changed

Plenty has been written about transformers as a deep-learning architecture. What I want to call out is what specifically changed for semantic search, because that is the part that touches everyone who works with enterprise data.

Self-attention captured context, in both directions, across long distances. Earlier recurrent models processed text one token at a time, lost information across long sequences, and struggled to relate words that were far apart. A transformer encoder reads every position in parallel and lets each token attend to every other token. The word "protocol" in a sentence about patient enrollment gets a vector shaped by "patient," "enrollment," "screening," and "consent." The same word in a sentence about a synthesis pathway gets a different vector, shaped by "reagent," "yield," "step," and "purification." For the first time, the protocol problem dissolved by construction.
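The mechanism can be sketched in a few lines. This is a deliberately stripped-down version of scaled dot-product self-attention: it assumes hand-made two-dimensional embeddings and uses the inputs directly as queries, keys, and values, omitting the learned projections, multiple heads, and positional encodings of a real transformer. The names `EMB` and `contextual` are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with Q = K = V = the input vectors."""
    dim = len(vectors[0])
    outputs = []
    for query in vectors:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        # Each output is a weighted average of all input vectors.
        outputs.append([sum(w * v[i] for w, v in zip(weights, vectors))
                        for i in range(dim)])
    return outputs

# Hypothetical static embeddings: axis 0 ~ "clinical", axis 1 ~ "chemistry".
EMB = {
    "protocol": [1.0, 1.0],
    "patient": [2.0, 0.0],
    "enrollment": [2.0, 0.0],
    "reagent": [0.0, 2.0],
    "yield": [0.0, 2.0],
}

def contextual(sentence, word):
    """Return the attention output for `word` within `sentence`."""
    vectors = [EMB[w] for w in sentence]
    return self_attention(vectors)[sentence.index(word)]

clinical = contextual(["patient", "enrollment", "protocol"], "protocol")
chemistry = contextual(["reagent", "yield", "protocol"], "protocol")
print(clinical)   # leans toward axis 0
print(chemistry)  # leans toward axis 1
```

The same static input vector for "protocol" comes out as two different contextual vectors, because each output is a blend of the words around it.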

Pretraining decoupled language understanding from task-specific labels. The earlier era of search needed armies of annotators to label training data for every domain. Transformers learn language from raw text at scale, then a small amount of task-specific fine-tuning specializes them. This is why an embedding model trained on the open web can be dropped into pharma, finance, or legal and still produce useful semantic vectors out of the box.

Vectors became the universal interchange format. Once every chunk of text could be turned into a dense vector that captured its meaning, retrieval became a nearest-neighbor problem. Vector databases — Oracle 26ai's AI Vector Search, Pinecone, pgvector, and the rest — productized that nearest-neighbor search at scale. The "protocol" problem at retrieval time was now: which chunk's vector is closest to the query's vector, in a space where closeness means similarity of meaning?
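As a sketch of what "nearest neighbor" means here, the example below ranks chunks by cosine similarity using brute force. The three-dimensional vectors are hand-made stand-ins for the output of an embedding model, and `top_k` is a hypothetical helper; production vector databases use approximate indexes rather than scanning every chunk.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

# Hypothetical chunk embeddings; a real system would compute these with a model.
CHUNKS = {
    "clinical protocol: screening and consent": [0.9, 0.1, 0.2],
    "synthesis protocol: reagents and purification": [0.1, 0.9, 0.2],
    "manufacturing protocol: batch release": [0.2, 0.2, 0.9],
}

def top_k(query_vec, chunks, k=1):
    """Return the k chunk texts whose vectors are closest to the query vector."""
    ranked = sorted(chunks, key=lambda text: cosine(query_vec, chunks[text]),
                    reverse=True)
    return ranked[:k]

# Pretend embedding of a chemistry-flavored question about a protocol.
query = [0.2, 0.8, 0.1]
print(top_k(query, CHUNKS))
```

All three chunks contain the word "protocol," yet only the chemistry chunk is returned, because ranking depends on position in the vector space rather than on shared tokens.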

Generation closed the loop. Generative transformers could do more than rank relevant text: given the retrieved passages, they could read them and synthesize. The deliverable changed from a list of documents to a paragraph of analysis. After twenty years of triaging tsunamis of search results, pharma scientists were suddenly looking at a different workflow.

Why This Worked at Scale

Three engineering realities made transformers practical, not just theoretically interesting. GPUs and TPUs made the parallelism in self-attention economically tractable to train. The internet provided the trillion-token text corpora needed to pretrain models large enough to generalize. And openly released models and libraries (BERT, T5, LLaMA, sentence-transformers) meant every enterprise could adopt the technology without rebuilding it from scratch. The science was necessary; the infrastructure made it inevitable.

From Search to Synthesis

The shift I find most consequential is not about better ranking. It is about what the user does after the search returns. In the keyword era, the search engine handed the scientist a stack of documents and the synthesis was their job. With RAG and generative AI, the synthesis is part of the response.

A scientist asking "what do we know about CYP3A4 inhibition by compound X" no longer gets fifty documents. They get a synthesized answer: a paragraph that integrates findings from those fifty documents, with citations back to the source text. They can drill in to verify, but they do not have to read all fifty to get the gist. The work product is closer to what a colleague would have given them after a day of reading.
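The "citations back to the source text" part is mostly plumbing. One plausible way to do it, sketched below, is to number the retrieved passages in the prompt and instruct the model to cite by number. The function name `build_prompt`, the instruction wording, and the placeholder passages are all assumptions for illustration; the call to the generative model itself is left out.

```python
def build_prompt(question, passages):
    """Assemble a grounded prompt; `passages` is a list of (source_id, text) pairs."""
    lines = [
        "Answer using only the numbered sources below.",
        "Cite sources as [n]. If the sources do not answer the question, say so.",
        "",
    ]
    for n, (source_id, text) in enumerate(passages, start=1):
        lines.append(f"[{n}] ({source_id}) {text}")
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)

# Placeholder passages standing in for chunks returned by the retrieval step.
retrieved = [
    ("report-A", "<text of the first retrieved chunk>"),
    ("report-B", "<text of the second retrieved chunk>"),
]
prompt = build_prompt("What do we know about CYP3A4 inhibition by compound X?",
                      retrieved)
print(prompt)
```

Because every numbered source maps back to a document id, a reader can follow any [n] in the answer to the passage it came from and check it.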

For twenty years the search engine returned a problem — a list. Now it returns a draft of the answer. That is a step-change innovation, not an incremental one.

This is the difference that puts semantic understanding into the hands of the masses. A non-specialist user does not need to learn query syntax, choose facets, or construct Boolean expressions. They ask a question in plain language and get an answer in plain language. The barrier to value collapsed.

• • •

The Two-Edged Sword

I want to be honest about the other side of this. The early days of generative AI in my own working domain — Oracle database performance — were a mixed bag. I spend a lot of time analyzing metrics from Oracle's Automatic Workload Repository (AWR), and I would routinely ask early LLMs to write SQL against AWR views. They would happily generate confident, well-structured queries against tables that did not exist. DBA_HIST_SOMETHING with three columns the LLM had invented. Fluent, plausible, and wrong.

At the same time, the same LLMs would do things that genuinely amazed me. AWR has tens of thousands of distinct performance metrics, and one of the things I do is organize them into taxonomies by infrastructure category (CPU, memory, I/O, network, locking, parsing) so I can reason about them at the right level of abstraction. I once fed a model several hundred AWR metric names and asked it to propose an infrastructure taxonomy. The result was excellent. Better than what I would have produced in a comparable amount of time. The model had seen enough Oracle internals on the open web to do real synthesis on a domain that requires deep expertise.

Both experiences are real. Both are characteristic of where this technology is. And the difference between them — between the hallucinated table names and the genuinely good taxonomy — was almost entirely my ability to evaluate the output. I knew the AWR schema cold, so the fake tables were obvious. I knew enough about infrastructure categories to recognize a good taxonomy when I saw one. A user without that domain knowledge could have been led down a wrong path in either direction.

Where LLMs Fall Short

· Confidently invent specific facts — table names, function signatures, citations

· Smooth over the seams between truth and confabulation

· Get worse at the boundaries of their training data, with no warning

· Reward fluent answers over accurate ones unless you press

Where LLMs Are Astonishing

· Pattern-match across vast prior text to propose taxonomies, structures, frameworks

· Synthesize a coherent narrative from unstructured inputs

· Translate between domains and audiences in seconds

· Generate code, plans, and drafts you can edit faster than you could write

This is my soap-box take, and I do not think it gets stated often enough: using an LLM to learn a subject you have no way to evaluate is genuinely dangerous. You will not know which parts are right. The fluency is not a signal of accuracy — it is a feature of the model. You need either some domain knowledge, a way to validate against authoritative sources, or both. Otherwise you are accepting confident output on faith, and that is a bad bet.

The good news is that the models keep getting better. The hallucinations on Oracle internals that were common a few years ago are dramatically rarer now. RAG architectures, where the model is grounded in retrieved authoritative content, reduce the failure mode further. Tool-calling models that can actually run a query against AWR, rather than guess what the schema looks like, close the loop in a different way. We are not at the end of this curve, but we are visibly further along it than we were even twelve months ago.
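Even without a tool-calling model, one cheap check is to compare the view names in generated SQL against a known catalog before running anything. The sketch below assumes a small hard-coded allowlist of AWR views; in practice the list would come from the database's own data dictionary. `unknown_awr_views` is a hypothetical helper, and the sample query with its invented `wait_score` column mimics the kind of output described earlier.

```python
import re

# A few real AWR views, hard-coded for this sketch only.
KNOWN_VIEWS = {
    "DBA_HIST_SNAPSHOT",
    "DBA_HIST_SYSSTAT",
    "DBA_HIST_SQLSTAT",
    "DBA_HIST_ACTIVE_SESS_HISTORY",
}

def unknown_awr_views(sql, known=KNOWN_VIEWS):
    """Return DBA_HIST_* names referenced in `sql` that are not in `known`."""
    referenced = set(re.findall(r"\bDBA_HIST_\w+", sql.upper()))
    return sorted(referenced - known)

# Model-style output: one real view joined to one invented view.
generated = (
    "SELECT s.snap_id, x.wait_score "
    "FROM dba_hist_snapshot s "
    "JOIN dba_hist_something x ON x.snap_id = s.snap_id"
)
print(unknown_awr_views(generated))  # ['DBA_HIST_SOMETHING']
```

This catches invented view names but not invented columns or wrong logic, so it narrows the need for domain knowledge rather than replacing it.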

What This Means for the Way We Work

Twenty years on from "Google for the Enterprise," the technology has finally caught up to the ambition. Semantic search works. Synthesis is part of the response. Domain-aware models are within reach of any enterprise willing to do the engineering. The protocol problem is solved — not by smarter ontologies, but by models that learn what words mean from how they are used.

The right posture, in my view, is the one I keep coming back to: work faster, work smarter, trust but verify. Use these tools to learn, but bring, or build, the domain knowledge needed to evaluate the answers. Use them to draft taxonomies, project plans, code, analyses, and so on. Edit like crazy. Push back on the outputs. Treat the model as a fast, capable, occasionally overconfident collaborator, not as an oracle.

The scientists I worked with in the early 2000s would not believe what an enterprise search box can do in 2026. The protocol problem — the one I lost sleep over for years — is no longer the hard part. The hard part now is making sure the humans staying in the loop know enough to keep the answers honest. That is a much better problem to have.